Wind Turbine Reliability Starts with Better Data

Access to generator, converter, and transformer health data is crucial in both onshore and offshore wind industries. In this blog, we explore the...

In this first installment of our blog series, Building a Reliable and Scalable Predictive Maintenance Strategy, we introduce two approaches to predictive maintenance.

Unplanned downtime poses a significant challenge to efficient service, leading to costly and unsustainable operations. Predictive maintenance, which offers actionable insights to anticipate and address component issues before they cause downtime, has been a sought-after solution. However, the perceived mystery behind data science and AI can be daunting enough to deter many service organizations from pursuing a predictive maintenance solution. In some cases, even organizations that have embraced such initiatives fail to achieve their full potential.

How can you move past AI's difficult reputation and harness it to gain real, tangible, and scalable results? The answer may lie in how we capture the "intelligence."

In our next installment, we'll share the approach WindESCo is taking to tackle asset health monitoring.

Wind turbines are complex systems where failures in electrical components - such as generators, power converters, power electronics, drives and...

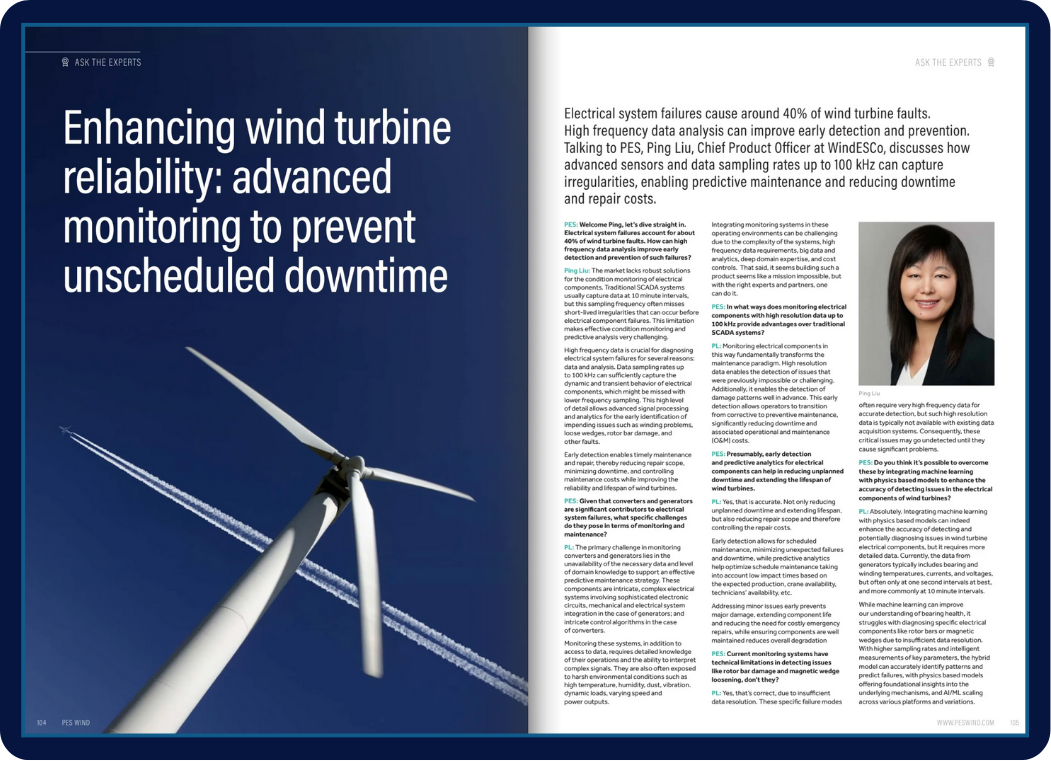

In a recent "Ask the Experts" interview with PES Wind (Issue 3, 2024), our Chief Technology Officer, Ping Liu, discusses how advanced sensors and...